Manual:Xen

Xen Virtualization Overview

Xen support has been discontinued since version 4.4.

Virtualization technologies enable a single physical device to run multiple different operating systems. Virtualization support in RadioOS allows running multiple copies of RadioOS software and even other supported operating systems. Note that virtualization support depends on the system architecture: not all architectures that RadioOS supports allow virtualization.

The ability to run non-RadioOS software lets the user run applications that are not included in RadioOS.

Xen is the RadioOS virtualization system for x86 machines. It is based on the Xen virtual machine for Linux.

x86 Virtualization Support

Virtualization support on x86 architecture systems is implemented using the Xen hypervisor (http://www.xen.org). This enables RadioOS to run other operating systems that support Xen paravirtualization in "virtual machines" (guests), controlled by RadioOS software (host).

Virtualization support for x86 architecture systems is included in RadioOS software versions starting with 3.11. To enable virtualization support, the "xen" package must be installed.

The host RadioOS software sets up virtual machines so that they use files in the RadioOS host file system as disk images. Additionally, the host RadioOS can set up virtual ethernet network interfaces between itself and a virtual machine. This enables virtual machines to participate in the network under control of the host RadioOS software.

In order to execute operating system in virtual machine, you need:

- OS kernel that supports Xen paravirtualization

- OS disk image

- (optionally) initial ram disk to use while booting OS in VM

If RadioOS image is used for booting in VM, OS kernel and initial ram disk are not necessary - specifying RadioOS disk image is sufficient. RadioOS images for use by VMs can be created in 2 ways:

- either by taking image from existing RadioOS x86 installation that supports virtualization (version >= 3.11)

- or by using special RadioOS functions to create RadioOS image to use in VM (note that these functions do not produce RadioOS image that can be copied and successfully run from physical media!).

The latter approach is more flexible because it allows the user to specify the disk image size.

To run a non-RadioOS operating system in a VM, you need a Linux kernel, a disk image and (if necessary) an initial ram disk file.

Note that a disk image can only be used by one VM at a time.

Creating RadioOS image to use in VM

To create RadioOS image to use in VM use "/xen make-RadioOS-image" command:

[admin@CableFree] /xen> make-RadioOS-image file-name=ros1.img file-size=32
[admin@CableFree] /xen> /file print
 #   NAME       TYPE        SIZE       CREATION-TIME
 0   ros1.img   .img file   33554432   jun/06/2008 14:47:23

This produces a 32MB RadioOS image that is ready to use in a VM. The new RadioOS image is based on the host system software and therefore contains all software packages that are installed on the host system, but does not contain the host configuration.
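Judging from the file listing above, "file-size" appears to be given in megabytes of 1024 × 1024 bytes. The following minimal Python sketch checks that arithmetic; the helper name is purely illustrative and is not a RadioOS function.

```python
# Illustrative helper (not part of RadioOS): converts a "file-size" value,
# assumed to be in units of 1024 * 1024 bytes, into a size in bytes.
def image_size_bytes(file_size_mb: int) -> int:
    return file_size_mb * 1024 * 1024


# file-size=32 should match the SIZE column reported by "/file print".
print(image_size_bytes(32))  # 33554432
```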

Additionally, "make-RadioOS-image" has a "configuration-script" file parameter that can be used to place an initial configuration script in the created image. The script is run on the first boot of the image.

VM Configuration

All virtualization for x86 architecture related functions are configured under "/xen" menu.

Memory Available to Host RadioOS

By default all the memory is available to host system, for example for system with 1GB of memory:

[admin@CableFree] > /system resource print

uptime: 2m4s

version: "3.9"

free-memory: 934116kB

total-memory: 963780kB

cpu: "Intel(R)"

cpu-count: 2

cpu-frequency: 2813MHz

cpu-load: 0

free-hdd-space: 77728884kB

total-hdd-space: 79134596kB

write-sect-since-reboot: 989

write-sect-total: 989

architecture-name: "x86"

board-name: "x86"

[admin@CableFree] > /xen global-settings print

memory-for-main: unlimited

In some cases this may limit the ability to allocate the memory needed for running guest VMs, because the host system may have used memory for e.g. filesystem caching purposes. Therefore it is advised to configure a limit on the memory available to the host system (the exact value of the limit depends on which software features are used on the host system; in general, the same rules apply as for choosing the amount of physical memory for a regular RadioOS installation):

[admin@CableFree] > /system resource print

uptime: 2m4s

version: "3.9"

free-memory: 934116kB

total-memory: 963780kB

cpu: "Intel(R)"

cpu-count: 2

cpu-frequency: 2813MHz

cpu-load: 0

free-hdd-space: 77728884kB

total-hdd-space: 79134596kB

write-sect-since-reboot: 989

write-sect-total: 989

architecture-name: "x86"

board-name: "x86"

[admin@CableFree] > /xen global-settings print

memory-for-main: unlimited

[admin@CableFree] > /xen global-settings set memory-for-main=128

[admin@CableFree] > /system reboot

Reboot, yes? [y/N]:

y

system will reboot shortly

....

[admin@CableFree] > /system resource print

uptime: 1m5s

version: "3.11"

free-memory: 114440kB

total-memory: 131272kB

cpu: "Intel(R)"

cpu-count: 2

cpu-frequency: 2813MHz

cpu-load: 0

free-hdd-space: 77728884kB

total-hdd-space: 79134596kB

write-sect-since-reboot: 794

write-sect-total: 794

architecture-name: "x86"

board-name: "x86"
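As a rough planning aid, the two "total-memory" values shown above can be used to estimate how many guests of a given size might fit into the memory no longer claimed by the host. The sketch below is only an upper bound under stated assumptions: 1 kB is taken as 1024 bytes, the guest "memory" setting is taken as megabytes of 1024 kB, and hypervisor overhead is ignored. The function name is hypothetical.

```python
# Values copied from the "/system resource print" outputs above (in kB).
TOTAL_BEFORE_KB = 963780  # total-memory with memory-for-main=unlimited
TOTAL_AFTER_KB = 131272   # total-memory with memory-for-main=128


def max_guests(guest_memory_mb: int) -> int:
    """Upper bound on the number of guests of the given size.

    Assumption: all memory released by the host is usable by guests;
    hypervisor overhead is not accounted for.
    """
    freed_kb = TOTAL_BEFORE_KB - TOTAL_AFTER_KB
    return freed_kb // (guest_memory_mb * 1024)


print(max_guests(64))   # 12
print(max_guests(128))  # 6
```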

Creating RadioOS VM

Assuming that the RadioOS image "ros1.img" has already been made, a new VM to run RadioOS can be created:

[admin@CableFree] /xen> add name=ros1 disk-images=hda:ros1.img memory=64 console-telnet-port=64000

[admin@CableFree] /xen> print detail

Flags: X - disabled, C - configuration-changed

0 X name="ros1" disk-images="hda:ros1.img" initrd="" kernel="" kernel-cmdline="" cpu-count=1 memory=64 weight=256

console-telnet-port=64000 state=disabled

The following parameters were passed to "add" command:

- disk-images=hda:ros1.img - this parameter specifies that file "ros1.img" in the host filesystem will be set up as disk "hda" (IDE Primary Master) in the guest system;

- memory=64 - this specifies amount of memory for guest VM;

- console-telnet-port=64000 - specifies that host system will listen on port 64000 and once telnetted to, will forward guests console output to telnet client and accept console input from telnet client.

There are a few other settings:

- kernel & initrd - VM kernel file to boot and initial ram disk file to use (if specified), as noted before, specifying these is not necessary when booting RadioOS image;

- kernel-cmdline - command line to pass to Linux kernel

- cpu-count - how many CPUs should be made available to VM;

- weight - proportional "importance" of this VM when scheduling multiple VMs for execution. Because the host operating system shares CPUs with all running guest VMs, the weight parameter specifies the proportional share of CPU(s) that the guest operating system gets when multiple operating systems compete for CPU resources. The weight of the host operating system is 256. So, for example, if a guest VM is also configured with weight 256 and both OSes are running at 100% CPU usage, both get an equal share of CPU. If the guest VM is configured with weight 128, it gets only 1/3 of the CPU (128 out of a total weight of 128 + 256 = 384).
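The weight arithmetic described above can be sketched in Python. This is only an illustration of proportional sharing under full contention, not the scheduler's actual implementation; the function name and the "host" key are assumptions made for the example.

```python
from fractions import Fraction

HOST_WEIGHT = 256  # weight of the host operating system, per the text above


def cpu_shares(guest_weights: dict) -> dict:
    """Proportional CPU share of the host and each guest when all of them
    compete for CPU at the same time."""
    weights = {"host": HOST_WEIGHT, **guest_weights}
    total = sum(weights.values())
    return {name: Fraction(weight, total) for name, weight in weights.items()}


# Guest with the same weight as the host: equal shares.
print(cpu_shares({"ros1": 256}))
# Guest with weight 128: the guest gets 1/3, the host gets 2/3.
print(cpu_shares({"ros1": 128}))
```

With two guests of weight 128 next to the host, the same calculation gives each guest 1/4 and the host 1/2.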

Starting, Stopping and Connecting to RadioOS VM

To start booting guest VM, enable it:

[admin@CableFree] /xen> enable ros1
[admin@CableFree] /xen> print
Flags: X - disabled, C - configuration-changed
 #    NAME   MEMORY   WEIGHT   STATE
 0    ros1   64       256      running

There are 2 (mutually exclusive, because there is just one virtual console provided for guest VM) ways to connect to console of running VM:

- by using "/xen console <VM name>" command, or

- by using telnet program and connecting to port specified in "console-telnet-port" parameter.

There are multiple ways to stop running VM:

- preferred way is to shut down from guest VM (e.g. by connecting to guest VM, logging in and issuing "/system shutdown" command).

- force shutdown from host RadioOS by using "/xen shutdown <VM name>" command;

- simply by disabling VM entry in "/xen" menu, note that this is the most dangerous way of stopping running VM, because guest VM can leave its filesystem in corrupt state (disabling VM entry for VM is the same as unplugging power for physical device).

VM shutdown state can be confirmed in "/xen" menu:

[admin@CableFree] /xen> shutdown ros1
[admin@CableFree] /xen> print
Flags: X - disabled, C - configuration-changed
 #    NAME   MEMORY   WEIGHT   STATE
 0    ros1   64       256      shutdown

In order to boot VM that is shut down, you must either disable and enable VM entry in "/xen" menu or use "/xen start <VM name>" command.

There is also the "/xen reboot <VM name>" command, which can be used to restart a running guest VM. Note that using this command is dangerous: although it instructs the guest VM to reboot, in most cases it does not cause the guest to flush its filesystem and terminate correctly.

If any guest VM related settings are changed for a VM entry in the "/xen" menu while the guest VM is running, those settings are not applied immediately (because that would involve destroying the VM and starting it again). Instead, the VM is marked as "configuration-changed" and the new settings are applied on the next reboot. For example:

[admin@CableFree] /xen> print
Flags: X - disabled, C - configuration-changed
 #    NAME   MEMORY   WEIGHT   STATE
 0    ros1   64       256      running
[admin@CableFree] /xen> set ros1 memory=32
[admin@CableFree] /xen> print
Flags: X - disabled, C - configuration-changed
 #    NAME   MEMORY   WEIGHT   STATE
 0 C  ros1   32       256      running
[admin@CableFree] /xen> shutdown ros1
[admin@CableFree] /xen> print
Flags: X - disabled, C - configuration-changed
 #    NAME   MEMORY   WEIGHT   STATE
 0    ros1   32       256      shutdown
[admin@CableFree] /xen> start ros1
[admin@CableFree] /xen> print
Flags: X - disabled, C - configuration-changed
 #    NAME   MEMORY   WEIGHT   STATE
 0    ros1   32       256      running

After this command sequence, the memory of the running guest is actually 32MB.

Reconfiguring RadioOS VM Image

With "/xen reconfigure-RadioOS-image", the RadioOS configuration in an existing RadioOS image can be wiped and a new configuration script put in its place (the script is executed the next time a VM using this image is started):

[admin@CableFree] /xen> reconfigure-RadioOS-image file-name=ros1.img configuration-script=script.file

Configuring VM Networking

In order for guest VM to participate in network, virtual interfaces that connect guest VM with host must be created. Virtual network connection with guest VM can be thought of as point-to-point ethernet network connection, which terminates in guest VM as "/interface ethernet" type interface and in host as "/interface virtual-ethernet" interface. By configuring appropriate data forwarding (either by bridging or routing) to/from virtual-ethernet interface in host system, guest VM can be allowed to participate in real network.

Configuring Network Interfaces for Guest VM

Network interfaces that will appear in guest VM as ethernet interfaces are configured in "/xen interface" menu:

[admin@CableFree] /xen interface> add virtual-machine=ros1 type=dynamic

[admin@CableFree] /xen interface> print detail

Flags: X - disabled, A - active

0 virtual-machine=ros1 vm-mac-addr=02:1C:AE:C1:B4:B2 type=dynamic static-interface=none

dynamic-mac-addr=02:38:19:0C:F3:98 dynamic-bridge=none

Above command creates interface for guest VM "ros1" with type "dynamic".

There are 2 types of interfaces:

- dynamic - the endpoint of the virtual network connection in the host ("/interface virtual-ethernet") is created dynamically when the guest VM is booted. With this type of interface the user avoids manually creating the endpoint of the virtual connection in the host, at the expense of limited flexibility in how this connection can be used (e.g. there is no reliable way to assign an IP address to a dynamically created interface). Currently, it can only be automatically added to the bridge specified in the "dynamic-bridge" parameter. This behaviour is similar to dynamic WDS interfaces for wireless WDS links.

- static - endpoint of virtual network connection in host ("/interface virtual-ethernet") must be manually created. This type of interface allows maximum flexibility because interface that will connect with guest VM is previously known (therefore IP addresses can be added, interface can be used in filter rules, etc.), at the expense of having to create "/interface virtual-ethernet" manually.

VM interfaces have the following parameters:

- virtual-machine - to which VM this interface belongs;

- vm-mac-addr - MAC address of ethernet interface in guest system;

- type - interface type as described above

- static-interface - when "type=static", this parameter specifies which "/interface virtual-ethernet" in host system will be connected with guest;

- dynamic-mac-addr - when "type=dynamic", automatically created "/interface virtual-ethernet" in host system will have this MAC address;

- dynamic-bridge - when "type=dynamic", dynamically created "/interface virtual-ethernet" will automatically get added as bridge port to this bridge.

Configuring Dynamic Interfaces

To create virtual connection that will have its endpoint in host dynamically made, use the following command:

[admin@CableFree] /xen interface> add virtual-machine=ros1 type=dynamic
[admin@CableFree] /xen interface> print detail
Flags: X - disabled, A - active
 0    virtual-machine=ros1 vm-mac-addr=02:1C:AE:C1:B4:B2 type=dynamic static-interface=none
      dynamic-mac-addr=02:38:19:0C:F3:98 dynamic-bridge=none

After enabling "ros1" VM, you can confirm that new virtual-ethernet interface is made with given dynamic-mac-addr:

[admin@CableFree] /xen> /interface virtual-ethernet print
Flags: X - disabled, R - running
 #    NAME   MTU    ARP       MAC-ADDRESS
 0 R  vif1   1500   enabled   02:38:19:0C:F3:98

And in guest VM ethernet interface is available with given vm-mac-addr:

[admin@Guest] > int ethernet print
Flags: X - disabled, R - running, S - slave
 #    NAME     MTU    MAC-ADDRESS         ARP
 0 R  ether1   1500   02:1C:AE:C1:B4:B2   enabled

By configuring "dynamic-bridge" setting, virtual-ethernet interface can be automatically added as bridge port to some bridge in host system. For example, if it is necessary to forward traffic between "ether1" interface on host and VM "ros1" ethernet interface, the following steps must be taken:

Create bridge on host system and add "ether1" as bridge port:

[admin@CableFree] > /interface bridge add name=to-ros1
[admin@CableFree] > /interface bridge port add bridge=to-ros1 interface=ether1

Next, specify that virtual-ethernet should automatically get added as bridge port:

[admin@CableFree] /xen interface> print detail
Flags: X - disabled, A - active
 0 A  virtual-machine=ros1 vm-mac-addr=02:1C:AE:C1:B4:B2 type=dynamic static-interface=none
      dynamic-mac-addr=02:38:19:0C:F3:98 dynamic-bridge=none
[admin@CableFree] /xen interface> set 0 dynamic-bridge=to-ros1

After this virtual-ethernet interface is added as bridge port on host:

[admin@CableFree] /xen interface> /interface bridge port print
Flags: X - disabled, I - inactive, D - dynamic
 #    INTERFACE   BRIDGE    PRIORITY   PATH-COST   HORIZON
 0    ether1      to-ros1   0x80       10          none
 1 D  vif1        to-ros1   0x80       10          none

By using similar configuration, user can, for example, "pipe" all traffic through guest VM - if there are 2 physical interfaces in host, user can create 2 bridges and bridge all traffic through guest VM (assuming that operating system in guest is configured in such a way that ensures data forwarding between its interfaces).

Configuring Static Interfaces

To create virtual connection whose endpoint in host system will be static interface, at first create static virtual-ethernet interface:

[admin@CableFree] /interface virtual-ethernet> add name=static-to-ros1 disabled=no
[admin@CableFree] /interface virtual-ethernet> print
Flags: X - disabled, R - running
 #    NAME             MTU    ARP       MAC-ADDRESS
 0 R  vif1             1500   enabled   02:38:19:0C:F3:98
 1    static-to-ros1   1500   enabled   02:3A:1B:DB:FC:CF

Next, create interface for guest VM:

[admin@CableFree] /xen interface> add virtual-machine=ros1 type=static static-interface=static-to-ros1
[admin@CableFree] /xen interface> print
Flags: X - disabled, A - active
 #    VIRTUAL-MACHINE   TYPE      VM-MAC-ADDR
 0 A  ros1              dynamic   02:1C:AE:C1:B4:B2
 1 A  ros1              static    02:DF:66:CD:E9:74

Now we can confirm that virtual-ethernet interface is active:

[admin@CableFree] /xen interface> /interface virtual-ethernet print
Flags: X - disabled, R - running
 #    NAME             MTU    ARP       MAC-ADDRESS
 0 R  static-to-ros1   1500   enabled   02:3A:1B:DB:FC:CF
 1 R  vif1             1500   enabled   02:38:19:0C:F3:98

And in guest system:

[admin@Guest] > /interface ethernet print
Flags: X - disabled, R - running, S - slave
 #    NAME     MTU    MAC-ADDRESS         ARP
 0 R  ether1   1500   02:1C:AE:C1:B4:B2   enabled
 1 R  ether2   1500   02:DF:66:CD:E9:74   enabled

Having a static interface in the host system allows the interface to be used anywhere in the configuration where an interface must be specified, e.g. when adding an IP address:

[admin@CableFree] > ip address add interface=static-to-ros1 address=1.1.1.1/24

In a similar way, add an IP address to the appropriate interface in the guest system and confirm that the host is reachable:

[admin@Guest] > /ip address add interface=ether2 address=1.1.1.2/24
[admin@Guest] > /ping 1.1.1.1
1.1.1.1 64 byte ping: ttl=64 time=5 ms
1.1.1.1 64 byte ping: ttl=64 time<1 ms
1.1.1.1 64 byte ping: ttl=64 time<1 ms
3 packets transmitted, 3 packets received, 0% packet loss
round-trip min/avg/max = 0/1.6/5 ms
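The ping succeeds without any extra routes because both addresses sit in the same /24 network on the virtual point-to-point link. A small Python check with the standard "ipaddress" module illustrates this; the variable names are illustrative only.

```python
import ipaddress

# Addresses taken from the example above.
host_if = ipaddress.ip_interface("1.1.1.1/24")   # static-to-ros1 on the host
guest_if = ipaddress.ip_interface("1.1.1.2/24")  # ether2 in the guest

# Both interfaces belong to the same network, so they can reach each other
# directly over the virtual ethernet link.
same_subnet = host_if.network == guest_if.network
print(host_if.network, same_subnet)  # 1.1.1.0/24 True
```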

Running non-RadioOS Systems as Guests

The Xen hypervisor based virtualization for x86 architectures that is used in RadioOS allows running other operating systems that use a Linux kernel with Xen guest support (DomU support in Xen terminology).

In order to run non-RadioOS system as guest on RadioOS host, you need:

- OS image file

- OS Linux kernel file

- optionally initial ram disk file

There are several ways to prepare the necessary files:

- using already prepared, ready to boot images (the easiest way);

- installing operating system along with necessary virtualization packages and using image from installed system (medium complexity);

- installing operating system, taking image, recompiling Linux kernel, adjusting system to boot under Xen hypervisor (the most complex).

Using Ready to Boot Image

If you have an operational Debian GNU/Linux based system with Xen installed, you can use the xen-tools scripts (http://www.xen-tools.org) to create/install images and use the Xen kernel and initrd from your distribution. Another option is to use already prepared images that are available for download, for example from http://jailtime.org. Note that these images do not contain the kernel itself, so they can only be used after taking an appropriate kernel and initrd file from a real distribution.

Additionally, here we provide some sets of files ready to use in guest VMs.

ClarkConnect 4.2 Community Edition SP1 Image

Image is prepared from installation ISO (ftp://ftp.clarkconnect.com/4.2/iso/community-4.2.SP1.iso) and additional Xen kernel package (ftp://ftp.clarkconnect.com/4.2/other/kernel-xen-2.6.18-8.1.14.3.cc.i686.rpm). Minimum software is installed.

Archive contains the following files:

- kernel: vmlinuz-2.6.18-8.1.14.3.ccxen

- initial RAM disk: clark.initrd.rgz, clark.otherinitrd.rgz (either one can be used)

- disk image: clark.img

To use this image in guest VM under RadioOS (remember that you have to upload files from archive to your RadioOS host), use the following command:

[admin@CableFree] /xen> add disk=hda disk-image=clark.img initrd=clark.otherinitrd.rgz kernel=vmlinuz-2.6.18-8.1.14.3.ccxen kernel-cmdline="root=/dev/hda1" memory=128 name=clark

Password for root user is rootroot.

CentOS 5.1 Image

Image is prepared using netinstall ISO (ISO file: CentOS-5.1-i386-netinstall.iso, available from mirrors listed at http://isoredirect.centos.org/centos/5/isos/i386/) and network based installation. Minimum software is installed.

Archive contains the following files:

- kernel: vmlinuz-2.6.18-53.el5xen

- initial RAM disk: centos.initrd.rgz

- disk image: centos.img

To use this image in guest VM under RadioOS (remember that you have to upload files from archive to your RadioOS host), use the following command:

[admin@CableFree] /xen> add disk=hda disk-image=centos.img initrd=centos.initrd.rgz kernel=vmlinuz-2.6.18-53.el5xen kernel-cmdline="ro root=/dev/hda1" memory=512 name=centos

Password for root user is rootroot.

Installing OS with Virtualization Support

One way to simplify OS installation is to install it into an image file using full virtualization software such as VMware or QEMU. This produces a ready-to-use image file and does not require any additional hardware.

Example: Preparing ClarkConnect Community Edition 4.2 SP1 Image

Below find instructions on how to get ClarkConnect 4.2 Community Edition run as guest VM. Note that ClarkConnect installation does not provide support for virtualization by default, therefore additional tweaks will be necessary.

Installing ClarkConnect

At first, create image where ClarkConnect will be installed:

~/xen$ qemu-img create clark.img 1Gb
Formatting 'clark.img', fmt=raw, size=1048576 kB

Next, start installation from ClarkConnect installation ISO image:

~/xen$ sudo qemu -hda clark.img -cdrom community-4.2.SP1.iso -net nic,vlan=0,macaddr=00:01:02:03:04:aa -net tap,vlan=0,ifname=tap0 -m 128 -boot d

Proceed with installation, creating one root partition and (optionally) swap space. Take into account disk size when selecting software packages to install. In this example disk is partitioned with 800MB root partition size and the rest of image for swap. Note that QEMU is instructed to emulate ethernet card, during installation this card is configured with IP address 10.0.0.23/24.

ClarkConnect installation does not provide support for virtualization by default, therefore virtualization support will have to be added manually. ClarkConnect distributes Xen-aware kernel package separately from installation, available at: ftp://ftp.clarkconnect.com/4.2/other/kernel-xen-2.6.18-8.1.14.3.cc.i686.rpm

In order to install this package we have to put it on newly created image. To do this, boot new image:

~/xen$ sudo qemu -hda clark.img -net nic,vlan=0,macaddr=00:01:02:03:04:aa -net tap,vlan=0,ifname=tap0 -m 128

Assuming that networking with QEMU virtual machine is configured properly, we can use SCP to put on package file:

~/xen$ scp ./kernel-xen-2.6.18-8.1.14.3.cc.i686.rpm root@10.0.0.23:/
The authenticity of host '10.0.0.23 (10.0.0.23)' can't be established.
RSA key fingerprint is 70:84:b8:c5:6d:62:37:d1:1e:96:29:d0:77:46:6a:0c.
Are you sure you want to continue connecting (yes/no)? yes
Warning: Permanently added '10.0.0.23' (RSA) to the list of known hosts.
root@10.0.0.23's password:
kernel-xen-2.6.18-8.1.14.3.cc.i686.rpm 100% 16MB 2.0MB/s 00:08

Next, connect to ClarkConnect and install kernel package. Note that this package is not entirely compatible with ClarkConnect 4.2 SP1 system and proper installation fails, but taking into account that the only purpose of installing this package is to get Xen enabled kernel and drivers, forced installation is fine, except that module dependency file must be created manually:

~/xen$ ssh root@10.0.0.23

root@10.0.0.23's password:

Last login: Tue Jun 10 07:20:34 2008

[root@server ~]# cd /

[root@server /]# rpm -i kernel-xen-2.6.18-8.1.14.3.cc.i686.rpm --force --nodeps

Usage: new-kernel-pkg [-v] [--mkinitrd] [--rminitrd]

[--initrdfile=<initrd-image>] [--depmod] [--rmmoddep]

[--kernel-args=<args>] [--banner=<banner>]

[--make-default] <--install | --remove> <kernel-version>

(ex: new-kernel-pkg --mkinitrd --depmod --install 2.4.7-2)

error: %post(kernel-xen-2.6.18-8.1.14.3.cc.i686) scriptlet failed, exit status 1

[root@server /]# ls /boot

config-2.6.18-53.1.13.2.cc initrd-2.6.18-53.1.13.2.cc.img symvers-2.6.18-8.1.14.3.ccxen.gz vmlinuz-2.6.18-53.1.13.2.cc xen-syms-2.6.18-8.1.14.3.cc

config-2.6.18-8.1.14.3.ccxen memtest86+-1.26 System.map-2.6.18-53.1.13.2.cc vmlinuz-2.6.18-8.1.14.3.ccxen

grub symvers-2.6.18-53.1.13.2.cc.gz System.map-2.6.18-8.1.14.3.ccxen xen.gz-2.6.18-8.1.14.3.cc

[root@server /]# depmod -v 2.6.18-8.1.14.3.ccxen -F /boot/System.map-2.6.18-8.1.14.3.ccxen

....

Next, copy out some files from installed system:

- Xen enabled kernel (/boot/vmlinuz-2.6.18-8.1.14.3.ccxen)

- initial ram disk (/boot/initrd-2.6.18-53.1.13.2.cc.img)

~/xen$ scp root@10.0.0.23:/boot/vmlinuz-2.6.18-8.1.14.3.ccxen ./
root@10.0.0.23's password:
vmlinuz-2.6.18-8.1.14.3.ccxen 100% 2049KB 2.0MB/s 00:01
~/xen$ scp root@10.0.0.23:/boot/initrd-2.6.18-53.1.13.2.cc.img ./
root@10.0.0.23's password:
initrd-2.6.18-53.1.13.2.cc.img 100% 434KB 433.8KB/s 00:00

Default ClarkConnect installation does not execute login process on Xen virtual console, so in order to have login available on virtual console accessible from RadioOS with "/xen console <VM name>" command, virtual console device should get made inside image (mknod /dev/xvc0 c 204 191).

Default ClarkConnect initial ram disk does not support booting from Xen virtual disk because it does not contain driver for virtual disk. To overcome this problem initial ram disk must be updated.

Updating initrd Manually

One option for making an initial ram disk that supports booting from a virtual disk is to manually put the virtual disk driver in the initrd and update its init script to load this module.

At first we extract contents of initial ram disk that was copied from ClarkConnect image:

~/xen$ file initrd-2.6.18-53.1.13.2.cc.img
initrd-2.6.18-53.1.13.2.cc.img: gzip compressed data, from Unix, last modified: Tue Jun 10 14:01:27 2008, max compression
~/xen$ mv initrd-2.6.18-53.1.13.2.cc.img clarkinitrd.gz
~/xen$ gunzip clarkinitrd.gz
~/xen$ file clarkinitrd
clarkinitrd: ASCII cpio archive (SVR4 with no CRC)
~/xen$ mkdir initrd
~/xen$ cd initrd/
~/xen/initrd$ sudo cpio -idv --no-absolute-filenames < ../clarkinitrd
.
etc
bin
bin/insmod
bin/nash
bin/modprobe
sysroot
sys
lib
lib/sd_mod.ko
lib/libata.ko
lib/scsi_mod.ko
lib/ata_piix.ko
lib/ext3.ko
lib/jbd.ko
sbin
dev
dev/console
dev/systty
dev/tty3
dev/tty2
dev/tty4
dev/ram
dev/tty1
dev/null
init
loopfs
proc
1990 blocks
~/xen/initrd$ cat init
#!/bin/nash
mount -t proc /proc /proc
setquiet
echo Mounted /proc filesystem
echo Mounting sysfs
mount -t sysfs none /sys
echo "Loading scsi_mod.ko module"
insmod /lib/scsi_mod.ko
echo "Loading sd_mod.ko module"
insmod /lib/sd_mod.ko
echo "Loading libata.ko module"
insmod /lib/libata.ko
echo "Loading ata_piix.ko module"
insmod /lib/ata_piix.ko
echo "Loading jbd.ko module"
insmod /lib/jbd.ko
echo "Loading ext3.ko module"
insmod /lib/ext3.ko
echo Creating block devices
mkdevices /dev
echo Creating root device
mkrootdev /dev/root
umount /sys
echo Mounting root filesystem
mount -o defaults --ro -t ext3 /dev/root /sysroot
echo Switching to new root
switchroot /sysroot

From the above we see that init script in initrd image loads drivers for SCSI and ATA disks, as well as EXT3 filesystem modules. In order for ClarkConnect to boot under Xen we have to replace hardware drivers with Xen virtual disk driver and EXT3 filesystem modules with appropriate modules for Xen kernel. Take these modules from installed ClarkConnect system:

~/xen/initrd$ cd lib/
~/xen/initrd/lib$ ls
ata_piix.ko ext3.ko jbd.ko libata.ko scsi_mod.ko sd_mod.ko
~/xen/initrd/lib$ sudo rm ata_piix.ko libata.ko scsi_mod.ko sd_mod.ko
~/xen/initrd/lib$ scp root@10.0.0.23:/lib/modules/2.6.18-8.1.14.3.ccxen/kernel/fs/jbd/jbd.ko ./
root@10.0.0.23's password:
jbd.ko 100% 70KB 69.8KB/s 00:00
~/xen/initrd/lib$ sudo scp root@10.0.0.23:/lib/modules/2.6.18-8.1.14.3.ccxen/kernel/fs/ext3/ext3.ko ./
root@10.0.0.23's password:
ext3.ko 100% 141KB 141.5KB/s 00:00
~/xen/initrd/lib$ sudo scp root@10.0.0.23:/lib/modules/2.6.18-8.1.14.3.ccxen/kernel/drivers/xen/blkfront/xenblk.ko ./
root@10.0.0.23's password:
xenblk.ko 100% 22KB 22.0KB/s 00:00

Next, update init script so that it loads Xen virtual disk driver. Final init script should look like this:

~/xen/initrd$ cat init
#!/bin/nash
mount -t proc /proc /proc
setquiet
echo Mounted /proc filesystem
echo Mounting sysfs
mount -t sysfs none /sys
echo "Loading xenblk.ko module"
insmod /lib/xenblk.ko
echo "Loading jbd.ko module"
insmod /lib/jbd.ko
echo "Loading ext3.ko module"
insmod /lib/ext3.ko
echo Creating block devices
mkdevices /dev
echo Creating root device
mkrootdev /dev/root
umount /sys
echo Mounting root filesystem
mount -o defaults --ro -t ext3 /dev/root /sysroot
echo Switching to new root
switchroot /sysroot

Create initrd file from directory structure with modifications that have been made:

~/xen/initrd$ find * | cpio -o -H newc -O ../clarkinitrd.new
~/xen/initrd$ find . -depth | cpio -ov --format=newc > ../clark.initrd
~/xen/initrd$ cd ../
~/xen$ gzip -c -9 < clark.initrd > clark.initrd.rgz
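Before uploading the result, it can be useful to confirm that the file has the same layout as the original initrd: gzip-compressed data wrapping a "newc" cpio archive (reported by "file" as "ASCII cpio archive (SVR4 with no CRC)"). The Python sketch below checks the well-known magic bytes of both formats; the function name is hypothetical and the check does not validate the archive contents.

```python
import gzip

GZIP_MAGIC = b"\x1f\x8b"     # first two bytes of any gzip stream
CPIO_NEWC_MAGIC = b"070701"  # header magic of a newc cpio archive (no CRC)


def describe_initrd(data: bytes) -> str:
    """Classify raw initrd bytes by their magic numbers."""
    if data[:2] == GZIP_MAGIC:
        inner = gzip.decompress(data)
        if inner[:6] == CPIO_NEWC_MAGIC:
            return "gzip-compressed newc cpio archive"
        return "gzip-compressed data (not newc cpio)"
    if data[:6] == CPIO_NEWC_MAGIC:
        return "uncompressed newc cpio archive"
    return "unknown"


# Usage sketch, assuming the file name from the example above:
# with open("clark.initrd.rgz", "rb") as f:
#     print(describe_initrd(f.read()))
```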

Using mkinitrd Utility

Instead of creating the initial ram disk manually as described above, it is possible to use the mkinitrd utility available in the ClarkConnect distribution. After the Xen kernel package is installed as shown above, the initial ram disk can be created with the following command (note that this command must be executed in ClarkConnect, e.g. running in the QEMU VM):

[root@server /]# mkinitrd clark.otherinitrd.rgz 2.6.18-8.1.14.3.ccxen --omit-scsi-modules --omit-raid-modules --omit-lvm-modules --with=xenblk

After this, newly created clark.otherinitrd.rgz must be copied from ClarkConnect image.

Adding ClarkConnect VM in RadioOS

Finally, upload the files to the host RadioOS (don't forget to shut down the QEMU instance that is running the image) and create the guest VM entry:

[admin@CableFree] /xen> print detail

Flags: X - disabled

1 X name="clark" disk=hda disk-image="clark.img" initrd="clark.otherinitrd.rgz" kernel="vmlinuz-2.6.18-8.1.14.3.ccxen"

kernel-cmdline="root=/dev/hda1" cpu-count=1 memory=128 weight=256 console-telnet-port=64000 state=disabled

Note that VM is configured with files that were made in previous steps. Also pay attention to "kernel-cmdline" parameter that is supplied. This instructs ClarkConnect where its root file system is - as we are providing ClarkConnect image with "disk=hda", and during installation root filesystem was made as first partition in image, root file system is on device /dev/hda1.

On first boot of ClarkConnect, it will detect changes in hardware and also enable login on virtual console device.

Example: Preparing CentOS 5.1 Image

CentOS is a Red Hat Linux based Linux distribution. The distribution includes the software packages necessary for virtualization support, so installing a CentOS image that supports virtualization is rather simple.

Installing CentOS 5.1

To create example CentOS image, we use QEMU for CentOS installation the same way as in previous ClarkConnect example.

Create image file and start QEMU with CentOS netinstall ISO image:

~/xen$ qemu-img create centos.img 2Gb
Formatting 'centos.img', fmt=raw, size=2097152 kB
~/xen$ sudo qemu -hda centos.img -cdrom CentOS-5.1-i386-netinstall.iso -net nic,vlan=0,macaddr=00:01:02:03:04:aa -net tap,vlan=0,ifname=tap0 -m 256

Note that the netinstall ISO image is used, so software packages are downloaded from the network. This means that network connectivity of the QEMU VM must be configured and running.

During installation follow partition scheme as in previous example for ClarkConnect. Example image is created with partition scheme as can be seen in image:

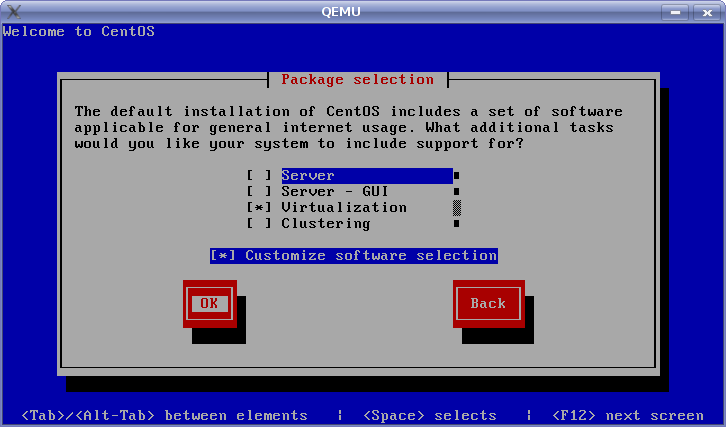

Also, during installation select the "Virtualization" software set.

When installation is complete, the CentOS image does not boot under the QEMU emulator because QEMU does not support running the Xen hypervisor. This does not matter, because all software necessary for running as a guest is already installed in the image. It does, however, require a different approach to extracting the necessary files from the image (for ClarkConnect this was done by connecting to the VM running under QEMU and copying the files out).

Preparing Initial Ram Disk

To take Xen kernel from CentOS image and to prepare initrd (that would include driver for virtual disk), use the following steps.

Mount the root partition of the image using a loopback device (note that the 1st partition in the image starts at sector 63, therefore we use an offset in the image file to point to the beginning of the partition):

# mount centos.img /mnt -o loop,offset=$[512*63]
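The offset passed to mount is simply the partition's start sector multiplied by the sector size. A minimal Python sketch of that calculation follows; the 512-byte sector size comes from the command above, and the function name is illustrative.

```python
SECTOR_SIZE = 512  # bytes per sector, as used in the mount command above


def partition_offset(start_sector: int, sector_size: int = SECTOR_SIZE) -> int:
    """Byte offset of a partition inside a raw disk image."""
    return start_sector * sector_size


# The 1st partition starts at sector 63 in the example image.
print(partition_offset(63))  # 32256
```

For an image partitioned differently, the start sector would have to be read from the image's partition table first.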

Next, copy out kernel file:

# cp /mnt/boot/vmlinuz-2.6.18-53.el5xen ./

To prepare the initrd file we use the mkinitrd tool. To force it to work on the mounted image, use the chroot command:

# chroot /mnt /bin/sh
sh-3.1# mkinitrd centos.initrd.rgz 2.6.18-53.el5xen --omit-scsi-modules --omit-raid-modules --omit-lvm-modules --with=xenblk
sh-3.1# exit

Adding CentOS VM in RadioOS

Now files to be used for running guest VM (kernel, newly made initrd and image) have to be put on RadioOS and appropriate VM entry should be made:

[admin@CableFree] /xen> print detail

Flags: X - disabled

0 X name="centos" disk=hda disk-image="centos.img" initrd="centos.initrd.rgz" kernel="vmlinuz-2.6.18-53.el5xen"

kernel-cmdline="ro root=/dev/hda1" cpu-count=1 memory=256 weight=256 console-telnet-port=64000 state=disabled

Notice that CentOS kernel is also passed arguments of which partition should be used for root file system, similar to ClarkConnect.

Adding Virtualization Support to Your Favourite Linux Based OS

To be able to run your favourite OS/distribution in guest VM, it must support Xen DomU, therefore enabling Xen support most likely will involve recompiling kernel. Disk and virtual network interface devices have to be accessed by Xen netfront and blockfront drivers, therefore you should make sure that these drivers are included in your system, either directly in kernel or as modules. Kernel must be compiled with PAE support.

Depending on Linux kernel that your distribution uses, it is possible that kernel source does not have support for Xen. This may mean that patching of kernel is necessary. In such cases you can refer to distributions that use similar kernel version and have vendor patches for Xen support.

... Some time later maybe example will come...